A DAG can also be triggered manually, from the GUI or from the command line. When the DAG is triggered, the Graph tab of the Webserver shows the run in real time.

Each task produces its own log on every run, and the log can be opened by clicking the task in the graph. The logs show each step Airflow ran to execute the task, the command line used, any errors that occurred, dependencies on other tasks, and other details such as the variables consumed and returned by the Operators. For example, the Colombia DAG configures the logging module in its check_last_update task to report the last update date of the EpiGraphHub data, so that output can be found in the Log section.

XCom and Rendered Template define the behavior of a task. They are how Airflow controls the relation between tasks, defining which information was received from previous tasks and which will be passed on to the next ones. Take, for instance, the foph DAG, which receives different CSV files and generates a Task Instance for each file by looping through a relation of tables (a list of dicts). The Scheduler compiles the DAG and fills in the generated information with the variables received from previous tasks, so the dictionary received from the list iteration can be seen in the Rendered Template section.
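The idea of generating one task per file from a list of dicts can be sketched in plain Python. This is only an illustration, not code from the foph DAG: the table entries, the `build_tasks` helper and the command text are hypothetical, and `string.Template` merely stands in for the templating that Airflow performs with Jinja.

```python
from string import Template

# Hypothetical relation of tables; the real entries of the foph DAG
# are not shown in the text.
TABLES = [
    {"table": "cases", "filename": "cases.csv"},
    {"table": "hosp", "filename": "hosp.csv"},
]

# Stand-in for a templated task field (Airflow itself renders with Jinja).
COMMAND = Template("load $filename into $table")


def build_tasks(tables):
    """Return one (task_id, rendered_command) pair per dict in the list."""
    tasks = []
    for entry in tables:
        task_id = f"load_{entry['table']}"
        # Each dict fills the placeholders, much like the values shown
        # in the Rendered Template section for a task instance.
        tasks.append((task_id, COMMAND.substitute(entry)))
    return tasks


for task_id, rendered in build_tasks(TABLES):
    print(task_id, "->", rendered)
```

Each dict yields its own task id and its own rendered values, which is the per-file information the Rendered Template section displays for a task instance.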

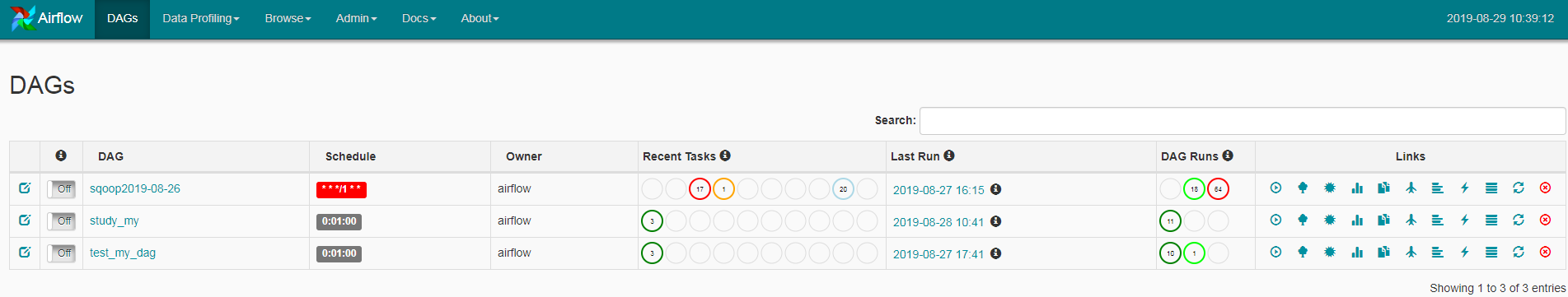

The Webserver, although not required for Airflow to operate, serves as the endpoint where the user manages and follows the pipeline of each DAG. Through it you can:

- easily find important information about DAG and task runs
- manually trigger DAGs, pause or delete them
- follow the logs of each task run individually
- configure external connections to PostgreSQL, AWS, Azure, MongoDB, Google Cloud (and much, much more)

The Webserver's first page is the login page. Users are defined in airflow.cfg and created during the Docker build of EpiGraphHub; more information about user configuration can be found in Airflow's Official Documentation.

As the Airflow Scheduler constantly compiles DAGs into Airflow's internal database, broken DAGs, a missing scheduler and other critical errors will appear on the GUI landing page.

A DAG is triggered as defined in its code. When a DAG is created, the interval between runs is set in the schedule_interval variable. Intervals can also be written as CRON patterns, for example `0 0 1 * *` (monthly, at midnight on day 1) or `30 18 * 6-12 MON-FRI` (at 18:30, Monday through Friday, in every month from June through December). Check the Official Documentation for more details on schedule intervals.
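To make the two CRON patterns above concrete, here is a minimal sketch of how a five-field pattern can be checked against a moment in time. It is not Airflow's own parser: `expand_field` and `matches` are hypothetical helpers that only support `*`, single values, ranges and comma lists, and they assume that at most one of the day-of-month and day-of-week fields is restricted.

```python
from datetime import datetime

# CRON numbers weekdays from Sunday (0) to Saturday (6).
DAY_NAMES = {"SUN": 0, "MON": 1, "TUE": 2, "WED": 3, "THU": 4, "FRI": 5, "SAT": 6}


def _value(token):
    """Convert a field token such as '18' or 'MON' to an integer."""
    token = token.upper()
    return DAY_NAMES[token] if token in DAY_NAMES else int(token)


def expand_field(field, low, high):
    """Expand '*', 'a', 'a-b' and comma lists into the set of allowed ints."""
    allowed = set()
    for part in field.split(","):
        if part == "*":
            allowed.update(range(low, high + 1))
        elif "-" in part:
            start, end = part.split("-")
            allowed.update(range(_value(start), _value(end) + 1))
        else:
            allowed.add(_value(part))
    return allowed


def matches(pattern, moment):
    """Return True if the datetime falls on a minute the pattern selects."""
    minute, hour, day, month, weekday = pattern.split()
    # Python counts Monday as 0; shift so that Sunday is 0 as in CRON.
    cron_weekday = (moment.weekday() + 1) % 7
    # Simplification: every field must match (fine when day or weekday is '*').
    return (
        moment.minute in expand_field(minute, 0, 59)
        and moment.hour in expand_field(hour, 0, 23)
        and moment.day in expand_field(day, 1, 31)
        and moment.month in expand_field(month, 1, 12)
        and cron_weekday in expand_field(weekday, 0, 6)
    )


print(matches("0 0 1 * *", datetime(2023, 3, 1, 0, 0)))
print(matches("30 18 * 6-12 MON-FRI", datetime(2023, 6, 5, 18, 30)))
```

The first call checks midnight on the first day of a month, and the second checks 18:30 on a Monday in June, so both fall inside their patterns.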

The Airflow Webserver is the GUI of Airflow.
